The main focus of our Security Operations Center is on threat detection, whether those threats are advanced or commodity; even ‘advanced’ threats tend to combine widely known tools and techniques. Yet we have noticed that, since Living-off-the-Land (LotL) attacks appeared in 2013, tools-based detection has not been a particularly efficient methodology. Many legitimate tools can also be used for malicious purposes, and such misuse can only be detected through behavior analysis. Modern operating systems actually contain everything needed to attack them, without any need to resort to malicious tools (see GTFOBins, LOLBAS). Sysadmins work with these same dual-use built-in tools every day, so distinguishing their activities from those of a threat actor is very difficult, and virtually impossible through automation alone.

The widely publicized Machine Learning/Deep Learning approach is not much help here either, because an advanced attacker will use the same tools, from the same workstations, against the same systems, and at the same time intervals as a real sysadmin: no anomalies, no outliers, nothing. Faced with this, only a human can perform correct triage, classifying observed activity as malicious or legitimate, or even doing something as simple as asking the IT staff whether they really performed these actions.

This is where the human SOC analyst is absolutely irreplaceable, and where Threat Hunting – the practice of iteratively searching through data collected from endpoint sensors in order to detect threats that successfully evade automatic security solutions – comes in.

The Kaspersky SOC continuously monitors more than 250k endpoints worldwide, and this number is constantly growing. A huge amount of EDR telemetry is received and processed from each of these sensors. While more than 90% of threats are detected and prevented automatically, and only around 10% go to human validation, the amount of raw telemetry requiring additional review is still enormous; analyzing all of it manually to deliver threat hunting to customers as an operational service would be impossible. The answer is to single out, for further review by a SOC analyst, those raw events that are in some way related to known (or merely theoretically possible) malicious activity.

In our SOC, we call these types of events ‘hunts’, as they help to automate the Threat Hunting process. The same ‘hunts’ are used in Kaspersky EDR, where they’re officially known as ‘Indicators of Attack’ (IoAs), and we will refer to them as IoAs from now on. IoA creation is an art, and like most art forms there’s more to it than systematic execution. Questions need to be asked and answered, such as ‘Which techniques need detecting as a priority, and which can wait a little?’ and ‘Which techniques is a real attacker most likely to use?’
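To make the idea of an IoA more concrete, here is a minimal sketch in Python. It assumes a simplified, hypothetical process-creation event format (`ProcessEvent`) and a hypothetical `IoA` structure; neither is the format used by Kaspersky EDR. The example rule flags the built-in `certutil.exe` when invoked with `-urlcache` and a URL, a well-known LOLBAS download pattern, and maps it to ATT&CK T1105. Matching events are not blocked; they are singled out for an analyst to review.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass(frozen=True)
class ProcessEvent:
    """Hypothetical, simplified process-creation event from an endpoint sensor."""
    host: str
    image: str         # full path of the launched executable
    command_line: str


@dataclass(frozen=True)
class IoA:
    """Hypothetical IoA: a named predicate over raw events, mapped to one ATT&CK technique."""
    name: str
    technique_id: str
    matches: Callable[[ProcessEvent], bool]


def _certutil_download(ev: ProcessEvent) -> bool:
    # certutil.exe is a legitimate built-in tool; this IoA looks only for the
    # '-urlcache' + URL combination used to fetch files from a remote server.
    image = ev.image.lower()
    cmd = ev.command_line.lower()
    return image.endswith("\\certutil.exe") and "-urlcache" in cmd and "http" in cmd


CERTUTIL_DOWNLOAD = IoA(
    name="certutil_urlcache_download",
    technique_id="T1105",  # Ingress Tool Transfer
    matches=_certutil_download,
)


def hunt(events: Iterable[ProcessEvent], ioas: List[IoA]) -> List[Tuple[str, ProcessEvent]]:
    """Single out raw events matching any IoA for review by a SOC analyst."""
    return [(ioa.name, ev) for ev in events for ioa in ioas if ioa.matches(ev)]
```

Run over a mix of events, `hunt` returns only the download invocation; a routine admin use of the same binary, such as `certutil -hashfile`, is not flagged.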

This is where knowledge of adversary methods, available as a convenient online resource, is so valuable. The MITRE ATT&CK matrix describes the TTPs (Tactics, Techniques and Procedures) of threat actors and their activities. It doesn’t just provide descriptions of adversary techniques: it also lists the particular threat actors who use them. This makes ATT&CK a very practical resource: these techniques are in use, and the related threats are real, not theoretical.

Four vectors of ATT&CK in your SOC

ATT&CK can be used in a number of ways for TTP-based detection prioritization and IoA description. The first approach, which we’ll call ‘threat-driven’, involves assessing the threats that employ particular techniques, understanding their relevance to the protected infrastructures, and then planning the development of related IoAs. In simple terms, ATT&CK techniques can be ranked by the number of threat actors using them; the most popular techniques go into the IoA development process first, and we then work our way down the list.

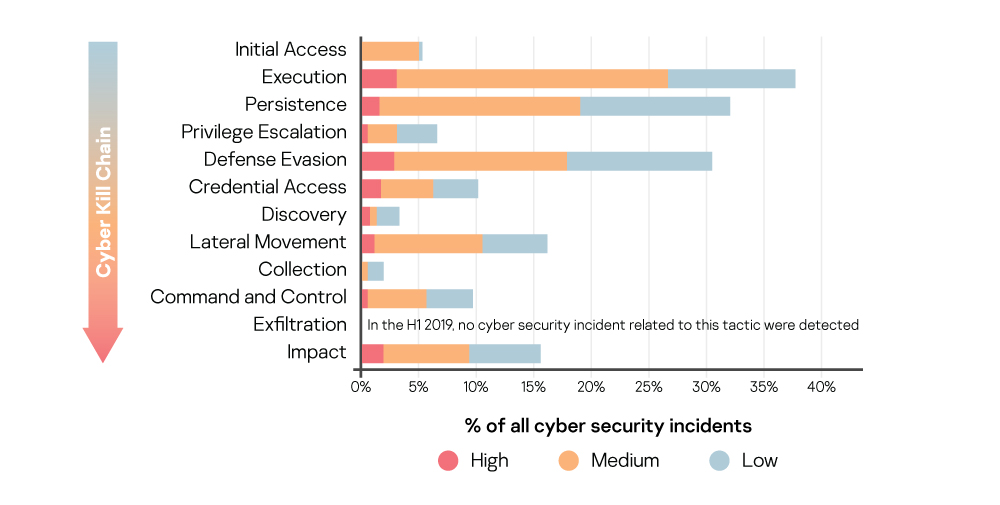
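The threat-driven ranking can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production tool: the actor names and their technique attributions in `ACTOR_TECHNIQUES` are invented placeholders (real data would be taken from the ATT&CK group pages), and the optional `relevant_actors` filter stands in for the judgment of which threats are relevant to the protected infrastructure.

```python
from collections import Counter
from typing import Dict, Iterable, List, Optional, Set, Tuple

# Hypothetical input: threat actor -> ATT&CK technique IDs attributed to it.
# These placeholder actors and attributions are invented for illustration only.
ACTOR_TECHNIQUES: Dict[str, Set[str]] = {
    "GroupA": {"T1059", "T1003", "T1021"},
    "GroupB": {"T1059", "T1003"},
    "GroupC": {"T1059", "T1105"},
}


def prioritize(actor_techniques: Dict[str, Set[str]],
               relevant_actors: Optional[Iterable[str]] = None) -> List[Tuple[str, int]]:
    """Rank techniques by how many (relevant) actors use them, most popular first."""
    relevant = set(relevant_actors) if relevant_actors is not None else None
    counts: Counter = Counter()
    for actor, techniques in actor_techniques.items():
        # Skip actors judged irrelevant to the protected infrastructure.
        if relevant is not None and actor not in relevant:
            continue
        counts.update(techniques)
    # Sort by descending actor count; break ties by technique ID for a stable order.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
```

With the placeholder data, `prioritize(ACTOR_TECHNIQUES)` returns `[("T1059", 3), ("T1003", 2), ("T1021", 1), ("T1105", 1)]`, so IoA development would start with T1059 and work down the list.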

The second approach is ‘capabilities-driven’. The idea is that the SOC assesses its own detection capabilities at different stages of the attack kill chain or, in MITRE ATT&CK terms, for each Tactic. In practice, this assessment can be extended to include the best confidence levels for detects (i.e. alerts with the lowest false-positive rates), the level of impact (ensuring that the highest-impact events are dealt with first), ease of investigation or average alert triage time (for example, where other IT and/or security solutions provide an additional level of visibility), or any combination of these factors, including classic risk assessment and business impact analysis.
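One simple way to combine these factors is a weighted score per tactic. The sketch below assumes each factor has already been normalized to a 0..1 scale; the `TacticAssessment` fields and the `WEIGHTS` values are hypothetical choices for illustration, and a real SOC would plug in its own risk model instead.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TacticAssessment:
    tactic: str
    confidence: float   # 0..1, e.g. 1 - false-positive rate of existing detects
    impact: float       # 0..1, impact if activity at this stage is missed
    triage_ease: float  # 0..1, higher = faster triage (e.g. extra visibility from other tools)


# Hypothetical weights: each SOC would tune these to its own risk model.
WEIGHTS: Dict[str, float] = {"confidence": 0.4, "impact": 0.4, "triage_ease": 0.2}


def score(a: TacticAssessment, weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of the assessment factors."""
    return (weights["confidence"] * a.confidence
            + weights["impact"] * a.impact
            + weights["triage_ease"] * a.triage_ease)


def rank_tactics(assessments: List[TacticAssessment],
                 weights: Dict[str, float] = WEIGHTS) -> List[str]:
    """Return tactic names, highest-priority first."""
    ranked = sorted(assessments, key=lambda a: score(a, weights), reverse=True)
    return [a.tactic for a in ranked]
```

A linear score keeps the ranking easy to explain to stakeholders; adding a factor such as business impact analysis only means adding a field and a weight.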

In mature environments, where endpoints and the network are secured by appropriate technologies (such as EDR and NFT) and there are additional tools for more comprehensive threat detection (such as a sandbox), the order of tactics for TTP-based detection might be: Persistence – Privilege Escalation – Defense Evasion – Credential Access – Lateral Movement – Exfiltration – Command and Control.
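Once such an order is agreed, it can drive the IoA development backlog directly. This minimal sketch encodes the tactic order given above and sorts hypothetical (IoA idea, tactic) pairs by it; the backlog items and the choice to put unlisted tactics last are assumptions made for illustration.

```python
from typing import List, Tuple

# Tactic order from the text, for a mature environment.
TACTIC_PRIORITY: List[str] = [
    "Persistence",
    "Privilege Escalation",
    "Defense Evasion",
    "Credential Access",
    "Lateral Movement",
    "Exfiltration",
    "Command and Control",
]
_RANK = {tactic: i for i, tactic in enumerate(TACTIC_PRIORITY)}


def order_backlog(candidates: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Sort (IoA idea, tactic) pairs by tactic priority.

    Tactics not in the list go last; sorted() is stable, so ideas under the
    same tactic keep their original relative order.
    """
    return sorted(candidates, key=lambda c: _RANK.get(c[1], len(TACTIC_PRIORITY)))
```

For a hypothetical backlog of "beaconing over DNS" (Command and Control), "new service creation" (Persistence), "network share enumeration" (Discovery) and "LSASS memory access" (Credential Access), the order becomes: new service creation, LSASS memory access, beaconing over DNS, network share enumeration.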

Having dealt with IoA development priorities, let’s move on to another ATT&CK use case in security operations: detect classification. Systematic threat research produces a growing number of IoAs. If that number is a few dozen, fine. But if there are several hundred, it becomes impossible to remember all of them, particularly if they’re written by a whole team of researchers rather than just one. There is also the question of whether a brand-new IoA is really needed, or whether the detection concept could be implemented as part of an existing IoA.

If all IoAs are mapped to ATT&CK tactics and techniques, and a rule is made that each IoA can address only one ATT&CK technique (while each technique can be covered by several IoAs), things become much easier. Researchers don’t need to review hundreds or thousands of existing IoAs to see where theirs fits in; they can focus on the particular technique, which greatly narrows down the preliminary analysis of existing detects when researching new ones. Mapping IoAs to ATT&CK also makes detection gaps clearly visible: automatically calculating and visualizing the ATT&CK coverage achieved by IoAs is very helpful in research planning.
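The one-technique-per-IoA rule is easy to enforce in tooling. Below is a minimal sketch of a hypothetical IoA catalogue (`IoARegistry` and the IoA names used with it are illustrative, not a real product API): registering an IoA twice is rejected, a researcher can list the existing IoAs for a technique before writing a new one, and coverage plus gaps fall out of the same index.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


class IoARegistry:
    """Hypothetical IoA catalogue enforcing 'one technique per IoA'."""

    def __init__(self) -> None:
        self._technique_of: Dict[str, str] = {}                     # IoA name -> technique ID
        self._by_technique: Dict[str, Set[str]] = defaultdict(set)  # technique ID -> IoA names

    def add(self, ioa_name: str, technique_id: str) -> None:
        # An IoA maps to exactly one technique; a technique may have many IoAs.
        if ioa_name in self._technique_of:
            raise ValueError(
                f"{ioa_name} is already mapped to {self._technique_of[ioa_name]}")
        self._technique_of[ioa_name] = technique_id
        self._by_technique[technique_id].add(ioa_name)

    def for_technique(self, technique_id: str) -> List[str]:
        """Existing IoAs a researcher should check before writing a new one."""
        return sorted(self._by_technique.get(technique_id, set()))

    def coverage(self, all_techniques: Iterable[str]) -> Tuple[float, List[str]]:
        """Share of techniques with at least one IoA, plus the uncovered gaps."""
        universe = set(all_techniques)
        if not universe:
            return 0.0, []
        covered = {t for t in universe if t in self._by_technique}
        return len(covered) / len(universe), sorted(universe - covered)
```

The gap list returned by `coverage` is exactly the input needed for the kind of research planning and coverage visualization described above.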

Last but not least, MITRE ATT&CK is a good knowledge base for SOC analysts. Let’s assume that each IoA generates an alert that goes to the SOC analyst’s queue for investigation. When all IoAs are mapped to ATT&CK techniques and alerts are enriched with information from the MITRE matrix, the SOC analyst already has a considerable amount of potentially valuable context to hand. And because MITRE collects all this information conveniently in one place, it also simplifies onboarding for newcomers to SOC analysis.
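Alert enrichment can be as simple as a lookup keyed by the technique ID the IoA is mapped to. In this sketch, `ATTACK_KB` is a hypothetical, hard-coded extract of ATT&CK metadata (a real SOC would load the full ATT&CK dataset), and the alert is assumed to be a plain dictionary carrying a `technique_id` field.

```python
from typing import Any, Dict

# Hypothetical local extract of ATT&CK technique metadata; a real SOC would
# load this from the full ATT&CK dataset rather than hard-code it.
ATTACK_KB: Dict[str, Dict[str, Any]] = {
    "T1003": {"name": "OS Credential Dumping", "tactics": ["Credential Access"]},
    "T1105": {"name": "Ingress Tool Transfer", "tactics": ["Command and Control"]},
}


def enrich(alert: Dict[str, Any], kb: Dict[str, Dict[str, Any]] = ATTACK_KB) -> Dict[str, Any]:
    """Return a copy of the alert with ATT&CK context added for the analyst."""
    tid = alert["technique_id"]
    enriched = dict(alert)  # don't mutate the original alert
    # Top-level technique pages follow this URL pattern; sub-techniques
    # (e.g. T1003.001) use a different path and are not handled here.
    enriched["attack_url"] = f"https://attack.mitre.org/techniques/{tid}/"
    info = kb.get(tid)
    if info is not None:
        enriched["technique_name"] = info["name"]
        enriched["tactics"] = list(info["tactics"])
    return enriched
```

An analyst opening an alert from a hypothetical `lsass_memory_access` IoA would then see the technique name, its tactic and a link to the full ATT&CK description without leaving the console.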

The ATT&CK limits

As with everything in life, the MITRE methodology has some constraints. The ‘Procedure’ element of the TTP acronym is very important, because ‘techniques’ are fairly high-level and, in practice, IoAs are developed for particular procedures. Unfortunately, not all procedures can be detected effectively, whether because of excessive false positives or because of technological and/or performance limitations. And there’s always a chance that a new implementation of an attack technique will appear in future. That’s why any vendor’s claim of extensive MITRE ATT&CK coverage means nothing without an understanding of the actual procedures tested. Each technique may have very many implementations and, of course, no vendor can cover them all, not least because not all of them are yet known.
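A small calculation shows why technique-level coverage can flatter a product. The sketch below uses invented test results (the procedure names and detection outcomes in `RESULTS` are hypothetical) and computes coverage two ways: counting a technique as covered if any of its tested procedures was detected, and counting individual procedures.

```python
from typing import Dict

# Hypothetical test results: technique ID -> {procedure: detected?}.
RESULTS: Dict[str, Dict[str, bool]] = {
    "T1003": {
        "lsass_dump_via_comsvcs": True,
        "lsass_dump_via_procdump": False,
        "sam_hive_via_reg_save": False,
    },
    "T1105": {"certutil_urlcache": True},
}


def technique_coverage(results: Dict[str, Dict[str, bool]]) -> float:
    """A technique counts as 'covered' if ANY tested procedure was detected."""
    covered = sum(1 for procs in results.values() if any(procs.values()))
    return covered / len(results)


def procedure_coverage(results: Dict[str, Dict[str, bool]]) -> float:
    """Share of individual procedures that were actually detected."""
    outcomes = [hit for procs in results.values() for hit in procs.values()]
    return sum(outcomes) / len(outcomes)
```

With this data, technique coverage is 1.0 while procedure coverage is only 0.5, and procedures that were never tested do not appear in either number.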

MITRE’s own ATT&CK Evaluations of vendors’ solutions provide a more objective assessment of their capabilities, because during the evaluation process particular procedures for each technique are tested, and test conditions are strictly identical for every participant. However, even here there are shortcomings that prevent the tests from truly emulating real-world conditions, and these should be noted.

In real-world environments, neither EPP nor EDR alone is enough; they need to work in combination. More than 90% of threats are prevented by EPP, and EDR, as the next layer, provides greater visibility for security analysts and incident responders. In addition, because EPP can go deep into analyzed objects (i.e. files, system and process memory) and operating system internals, it can provide valuable telemetry and additional enrichment for EDR events. Where EPP and EDR work together and EDR reuses EPP functionality, deactivating EPP components would significantly affect the detection capabilities of the EDR layer.

Rounds 1 and 2 of the ATT&CK Evaluations tested detection capabilities only, with a strict requirement that all prevention mechanisms be deactivated. But deactivating EPP functionality also deactivates some EDR telemetry, reducing visibility. For example, EPP today generally incorporates a network IPS and firewall (FW); suppose this IPS/FW component is responsible for the network connection telemetry used by EDR. Switching off the IPS/FW component to comply with the non-prevention requirement may then disable network connection telemetry and impair the EDR’s ability to detect some attack scenarios. In real-world environments, of course, prevention would not be intentionally deactivated.
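The effect of switching off prevention can be modelled as a dependency check. In this sketch, the telemetry types, component names and dependencies in `TELEMETRY_SOURCES` are hypothetical and vary by product; the point is only that any telemetry depending on a disabled EPP component disappears from the EDR view.

```python
from typing import Dict, List, Set

# Hypothetical dependency map: EDR telemetry type -> EPP components it needs.
# Which telemetry depends on which component varies by product.
TELEMETRY_SOURCES: Dict[str, Set[str]] = {
    "process_creation": set(),             # collected by the EDR sensor itself
    "network_connection": {"ips_fw"},      # supplied by the EPP IPS/firewall
    "memory_scan_verdict": {"av_engine"},  # EPP deep inspection of process memory
}


def available_telemetry(enabled_components: Set[str],
                        sources: Dict[str, Set[str]] = TELEMETRY_SOURCES) -> List[str]:
    """Telemetry types whose required EPP components are all enabled."""
    return sorted(t for t, deps in sources.items() if deps <= enabled_components)
```

With both components enabled, all three telemetry types are available; with prevention switched off (`available_telemetry(set())`), only `process_creation` remains.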

A second consideration is less critical but also worth mentioning: MITRE assesses the extent to which a vendor’s detects comply with the MITRE ATT&CK framework. This is understandable, because the evaluations are driven by MITRE, so the assessor expects detections that fit MITRE’s classification. But in practice, whether a solution’s alerts are linked to TTPs listed in ATT&CK matters far less than whether the alerts exist at all and carry information that simplifies investigation and reduces response time.

In fact, some ATT&CK techniques may not even need detecting, because in practice they don’t differ from legitimate activity and so could produce unacceptable levels of false positives. Examples include T1022: Data Encrypted, T1032: Standard Cryptographic Protocol and T1061: Graphical User Interface, among many others. If techniques like these are not covered by IoAs in a security solution, or are not shown as alerts in the SOC analyst’s console, that doesn’t necessarily mean the solution has insufficient detection capabilities.
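When measuring coverage, such techniques can simply be taken out of scope. This sketch uses the three techniques named above as its default exclusion list; any real exclusion list is a SOC-specific judgment call, and the technique sets in the example are hypothetical.

```python
from typing import Iterable

# Techniques named in the text as hard to distinguish from legitimate activity
# (legacy ATT&CK IDs). Any exclusion list is a SOC-specific judgment call.
LOW_VALUE = {"T1022", "T1032", "T1061"}


def effective_coverage(covered: Iterable[str], all_techniques: Iterable[str],
                       excluded: Iterable[str] = LOW_VALUE) -> float:
    """Coverage computed only over techniques considered worth detecting."""
    scope = set(all_techniques) - set(excluded)
    if not scope:
        return 0.0
    return len(scope & set(covered)) / len(scope)
```

For a hypothetical scope of six techniques, three of which are the ones above, covering the remaining three gives a naive coverage of 0.5 but an effective coverage of 1.0.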

Finally, an ATT&CK evaluation assesses only one attack scenario, based on a particular threat actor, which covers just a small subset of all TTPs in the ATT&CK database. Whether or not a security solution detects this particular scenario doesn’t mean it would perform the same against other, untested TTPs.

Conclusion

Targeted attacks are a reality, and every enterprise should practice efficient detection. To that end, enterprise security operations should include Threat Hunting as an internal SOC process, whether performed by an in-house monitoring team or as an outsourced service, and should be ready to detect threats without relying solely on alerts from automatic detection and prevention tools. The MITRE ATT&CK framework provides a systematic approach to Threat Hunting that makes sense for both beginner and more mature SOC teams to adopt. However, even extensive coverage of the ATT&CK matrix by a solution’s detects does not guarantee outstanding efficiency in practice, and enterprise threat hunting teams still have plenty to do even when no alerts appear. Never stop hunting, because silence is a scary sound.