When you visit a website, you can open your computer to a lot more danger than you might think. All sites load their own content, some load ads served by an ad network, some load content served by other sites, and some load services hosted by other sites. Often, you’re receiving a pretty motley assortment of visible and invisible code.

Sounds like something you need to worry about only on shady or small sites, right? Wrong. A recent analysis by Menlo Security of the world’s most-visited websites found that nearly half still expose visitors to vulnerable software, excessive active content, and large amounts of code execution; in other words, a lot of potential danger. In all, the researchers deemed 42% of the Alexa Top 100,000 “risky.”

Sites trusting other sites

The reasons a site was rated risky also included factors users can’t control at all: unpatched server software, a history of malware infection, a past security breach, and the like. Beyond the visited site itself, the findings revealed that each site calls an average of 25 background sites to fetch various types of content.

That means when you visit a website you presumably trust, you’re actually dealing with dozens of sites, most of which you’ve never even heard of.

Active-content risk varied widely across the sites studied, but even the best results hovered around 20%. That’s one in five top sites, which is bad odds for a visitor trying to get away clean. Note that in addition to videos and similar items, “active content” includes much of what makes a website more appealing and useful, such as dynamically updated, personalized information on weather, news, stocks, and so forth. That content is often delivered through JavaScript and Flash, technologies frequently (and justifiably) criticized for their vulnerabilities, a problem compounded by site owners’ failure to keep them updated.

Serving content from other sources has always introduced a degree of risk, but that risk grew much more significant once cybercriminals realized they could target those sources directly and make them distribute malware. Your favorite news site might be upright and security-minded, but are all of its providers?

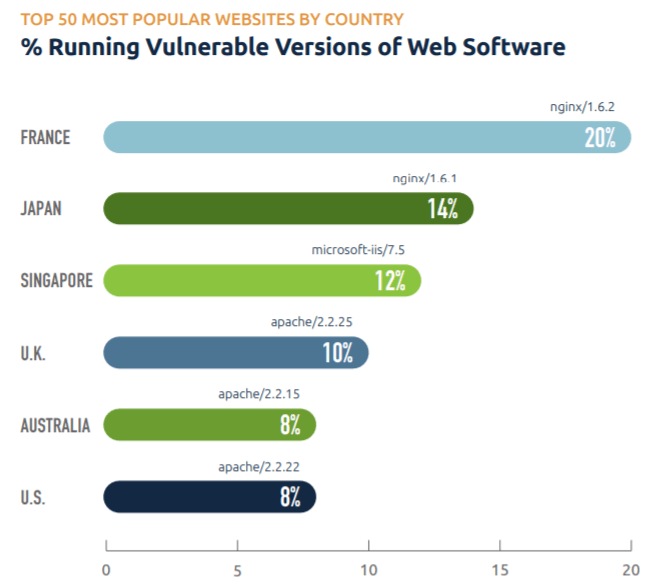

Vulnerable Web software

The report also states that many of the world’s most popular websites don’t need their partners to let them down; they manage that just fine on their own by running outdated server software. Some servers hadn’t been updated in years or even decades. Such sites are extremely vulnerable to malware and breaches, which in turn puts their visitors at risk.

Last year’s WannaCry outbreak should have taught the world that installing software updates promptly is important. Did it?

Stay safe

Ultimately, you cannot trust a website just because it’s popular, slick, or well-established. At the same time, you can’t compel site owners and administrators to look out for their visitors, so stay alert and disable Flash in your browsers. If you’re extremely cautious, consider disabling JavaScript as well, though be aware that some websites won’t work without it. Better yet, install a strong security solution and set it to update itself automatically. Kaspersky Internet Security keeps you safe by checking the websites you visit, scanning the files you download, and applying world-leading detection and protection against anything a rogue website (or its content servers) might try to foist on you.
